What causes forgetting? This is perhaps the most central question in memory research. It motivated even the earliest studies of memory, yet it remains hotly debated today. One of the oldest theories of memory formation is consolidation theory, dating back to Muller and Pilzecker (1900), over one hundred years ago. The theory states that memories are vulnerable to forgetting after they have been encoded. Some time after a memory has been encoded it can become consolidated, at which point it becomes resistant to forgetting and can persist for a much longer duration. According to the theory, memories are like plaster in an ever-changing sculpture – it is not until the plaster dries that it becomes a permanent part of the sculpture. While consolidation originally began as an explanation of the protective effects of rest and sleep after learning has occurred, it has gained a considerable foothold in the neuroscience of memory.

Since the original proposals of consolidation theory, however, there have been a number of noteworthy developments in the theorization of memory formation and retrieval. In particular, a number of computational models have been developed that have formalized complete theories of memory, down to encoding, representations, and retrieval processes. Such models have been successful in accounting for an extremely wide range of behavioral phenomena, including forgetting functions, advantages of spaced repetitions, recency advantages, word frequency effects, and proactive and retroactive interference.

Virtually all of the models that have been built in this tradition share one major thing in common: none of them uses a consolidation process. Memories are formalized as bindings between items and the contexts in which they occurred – these bindings are added to the contents of memory during learning, but they are left untouched after learning occurs. The fact that such models are able to achieve such breadth without a consolidation process raises the question of what role consolidation plays. It does not mean that such a process does not exist, but it does undermine its explanatory role in understanding forgetting.

A recent review in Nature Reviews Neuroscience by Andrew Yonelinas, Charan Ranganath, Arne Ekstrom, and Brian Wiltgen made this very point, arguing that the class of contextual-binding models is able to explain everything consolidation can explain and then some!

What consolidation can explain: Retroactive interference and power law forgetting

Muller and Pilzecker (1900) first proposed a consolidation explanation of retroactive interference – the finding that newly learned material interferes with retrieval of older material. They had participants study two lists of items (list 1 and list 2) and manipulated the amount of time that elapsed between the two lists. Not surprisingly, participants often struggled to recall list 1 items after learning the interfering list 2. You might have good memory for the sentence you’re reading at the moment, but recalling the previous sentence you’ve read might be a bit tricky.

What surprised Muller and Pilzecker was that performance on list 1 improved as the delay between the two lists was increased. Such a result is counter-intuitive when one considers that delays usually harm memory. For this reason, Muller and Pilzecker reasoned that a process of consolidation must take place during the delay interval, improving performance on the list 1 items. When there was no delay, consolidation was prevented from occurring by the interfering list 2 material.

The benefits of rest on memory have been replicated extensively and are particularly pronounced during periods of sleep. After learning a study list, participants exhibit better memory performance if they spend the retention interval asleep than if they spend the same period awake (Jenkins & Dallenbach, 1924). These findings have led consolidation theorists to argue that sleep is particularly important for consolidation processes to occur.

Finally, consolidation has also been proposed to explain one of the earliest targets of memory theories, namely the shape of the forgetting function. Since the pioneering studies of Hermann Ebbinghaus, researchers have tested memory at various retention intervals to quantify the functional form of forgetting. Researchers are nearly unanimous in their support for a power function, where the amount retained is proportional to the elapsed time raised to a negative power, with the size of that power acting as a decay rate parameter (Rubin & Wenzel, 1996).

Power functions deviate from another classic decay function, the exponential function, in one important way. In the exponential function, the rate of decay is proportional to the current strength of the memory. Exponential functions are common in nature – the rate at which water drips from a leaking bucket is proportional to the amount of water that remains in the bucket.

In power functions, however, the rate of decay also depends on how old a memory is: the proportion of the remaining strength that is lost per unit of time shrinks as the memory ages. That is, the longer a memory has survived, the more likely it is to continue to survive. In exponential functions, all memories, young and old, lose the same proportion of their strength per unit of time.
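The contrast between the two functions can be made concrete with a small sketch. The function names, the `(1 + t)` form of the power function, and the rate values are illustrative assumptions, not parameters taken from any of the cited papers.

```python
import math

def exponential_retention(t, rate=0.1):
    """Retention after t time units under exponential decay (assumed rate)."""
    return math.exp(-rate * t)

def power_retention(t, rate=0.5):
    """Retention under a power function; (1 + t) keeps retention at 1 when t = 0."""
    return (1.0 + t) ** (-rate)

def proportional_loss(retention, t, dt=1.0):
    """Fraction of the current strength that is lost over the next dt time units."""
    return 1.0 - retention(t + dt) / retention(t)

# Compare young, middle-aged and old memories under each function.
for age in (1, 10, 100):
    print(age,
          round(proportional_loss(exponential_retention, age), 4),
          round(proportional_loss(power_retention, age), 4))
```

Under these assumptions the exponential column stays constant across ages, while the power column shrinks as the memory gets older.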

But what makes older memories more likely to survive than newer ones? Wixted (2004) has argued that this is also due to the process of consolidation. Older memories are more likely to be consolidated and resistant to future forgetting than newer ones. He also made the connection between the power law and Ribot’s Law of retrograde amnesia, which states that in cases of hippocampal amnesia it is most likely the newer memories that are going to be impaired by hippocampal damage, while many older memories persist.

An alternative account: Memory in context

Yonelinas and colleagues argue that each of these results can be explained by a class of models they refer to as contextual-binding theory. This theory has been implemented in a number of different computational models. It was first formulated by Bill Estes in the 1950s (Estes, 1955) but was picked up later by Gordon Bower in the early 1970s as a broader explanation of memory.

The most popular current context-based model is the temporal context model (TCM: Howard & Kahana, 2002), but context features heavily in almost all current computational models, including the SAM (Mensink & Raaijmakers, 1988), REM (Lehman & Malmberg, 2013; Shiffrin & Steyvers, 1997), TODAM2 (Murdock, 1997), and BCDMEM (Dennis & Humphreys, 2001) models. Heck, I’ve even developed a context-based model (Osth & Dennis, 2015). It seems like everyone’s got one nowadays!

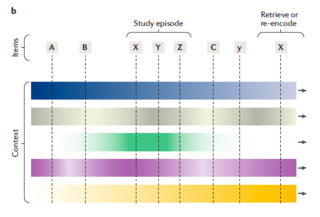

The core idea of the theory is that learning consists of binding items to a context representation (see the accompanying figure). The context representation consists of both external elements such as the place and setting, but also internal elements such as the cognitive and emotional state of the individual. Critically, these elements change over time, meaning that some items from a learning episode are bound to different contextual elements than others. This is illustrated in the figure from Yonelinas et al., where contextual elements are arranged as vertical bars – the changes in context are illustrated by the changes in color with respect to time (depicted on the x-axis).

An illustration of context-based models. Source: Yonelinas, A. P., Ranganath, C., Ekstrom, A. D., & Wiltgen, B. J. (2019). A contextual binding theory of episodic memory: Systems consolidation reconsidered. Nature Reviews Neuroscience, 20, 364-375.

The only changes that occur over time are the fluctuations to the active context representation. Item-context bindings are added to memory during learning but are unchanged afterward – the bindings are not altered or strengthened after learning occurs. It is this property that differentiates such models from consolidation theories, which argue that the learned memories are changed in important ways after learning.

At retrieval, the current state of context is used as a cue (see context state X in the figure). Following the encoding specificity principle of Endel Tulving, memories are more likely to be remembered if their contextual states at encoding match the context employed at retrieval. The contextual state at retrieval is more likely to match the most recent memories (such as Y in the figure), as context has changed very little between the current state and the context states of recently acquired memories. This yields a prediction for one of the oldest findings in memory research – the recency effect.

Another central finding in memory research is that memory benefits greatly from a spaced repetition schedule. Specifically, if you are free to schedule repetitions in any order you wish, spacing out repetitions over time is much more effective in promoting longer-term learning (Cepeda et al., 2006).

Contextual models also provide a simple explanation of such findings. Because context changes gradually over time, spaced repetitions are more likely to be associated to different states of context (Glenberg, 1976). This produces more “coverage” of the set of contextual elements, leading to a higher probability that one of those context states will match a contextual state in the future. When learning is massed, in contrast, a single state of context is strongly learned, but context may change quickly after that, causing forgetting to occur.
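The "coverage" argument can be sketched with a toy fluctuation scheme in the spirit of Estes (1955). Everything specific here is an assumption made for illustration: each context element keeps its state from one time step to the next with probability `p`, is otherwise redrawn and ends up active with probability `a`, and counts as bound to the item if it was active at either of two study events.

```python
def bound_share_at_test(gap, delay, p=0.9, a=0.3):
    """Share of the context elements active at test that are bound to the item.

    gap   -- time steps between the two study events
    delay -- time steps between the second study event and the test
    p, a  -- hypothetical persistence and activation probabilities
    """
    # P(inactive at study 2 | inactive at study 1): either no redraw occurred,
    # or the element was redrawn and came up inactive again.
    stay_unbound = 1.0 - a * (1.0 - p ** gap)
    # P(active at test | inactive at study 2): requires a redraw that came up active.
    unbound_but_active = a * (1.0 - p ** delay)
    # P(active at test AND inactive at both study events).
    active_and_unbound = (1.0 - a) * stay_unbound * unbound_but_active
    # The long-run probability of being active is a, so divide by it.
    return 1.0 - active_and_unbound / a

massed = bound_share_at_test(gap=1, delay=50)
spaced = bound_share_at_test(gap=20, delay=50)
print(round(massed, 3), round(spaced, 3))
```

With these made-up values the spaced schedule leaves a larger share of the test context bound to the item, because the two study events sampled less overlapping sets of elements.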

This is but a brief review of the phenomena that context-based models can explain. They can also explain temporal contiguity (Howard & Kahana, 2002) as well as benefits of context reinstatement (Godden & Baddeley, 1975). As I will discuss below, they can also explain several of the hallmark patterns of data that have been assumed to require a consolidation process, but also have led to some novel predictions in these paradigms that consolidation theories have difficulty explaining.

Context and retroactive interference

Context models can explain the retroactive interference data of Muller and Pilzecker (1900). In context-based models, retrieval is competitive – when context is used as a cue, memories that share contextual elements with the current cue all compete to be retrieved. This means that when two memories were studied close together in time, it may be difficult to retrieve both of them due to their similar states of context. In the figure above, one can see that the contextual states of “X” and “Y” are very similar, as there was little opportunity for the contextual states to change between their two presentations that were close together in time.

However, as time passes, the two memories will have more distinct context states, making them easier to retrieve independently. Consequently, context-based models can explain the finding that longer temporal intervals between List 1 and List 2 in the Muller and Pilzecker paradigm improve memory for List 1: the List 2 context is sufficiently different from the List 1 context, making it easier to retrieve memories from List 1.
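A minimal sketch of this competition is given below. It assumes, purely for illustration, that context overlap falls off as `p ** lag`, that the retrieval cue reinstates the List 1 context, and that retrieval follows a simple ratio rule over cue matches. None of these choices is taken from a specific published model.

```python
def context_similarity(lag, p=0.95):
    """Expected overlap between two context states separated by `lag` time steps."""
    return p ** abs(lag)

def list1_recall_probability(gap, p=0.95):
    """Chance that a List 1 memory wins the competition against a List 2 memory.

    gap -- time steps between List 1 and List 2 (the manipulated delay)
    """
    match_list1 = context_similarity(0, p)    # cue vs. List 1 context (assumed reinstated)
    match_list2 = context_similarity(gap, p)  # cue vs. List 2 context
    return match_list1 / (match_list1 + match_list2)

# Longer delays between the lists make the List 2 context a weaker competitor.
for gap in (0, 10, 50):
    print(gap, round(list1_recall_probability(gap), 3))
```

With no delay the two lists are indistinguishable to the cue and each wins half the time. As the gap grows, List 2 matches the cue less and List 1 recall improves, with no change to the stored List 1 memory itself.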

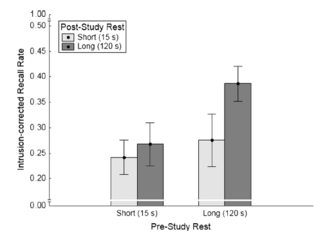

Context-based theories also produce a novel prediction: greater pauses before a relevant episode should promote better memory for the same reason – the memories of interest will have a more distinct state of context, leading to easier retrieval. This prediction was tested by Ecker, Tay, and Brown (2015) who manipulated both pre-study rest and post-study rest. They found that memory benefited from both types of rest equally! Results can be seen in the figure below.

Data from Ecker, Tay, and Brown (2015). Source: Ecker, U. K. H., Tay, J.-X., & Brown, G. D. A. (2015). Effects of prestudy and poststudy rest on memory: Support for temporal interference accounts of forgetting. Psychonomic Bulletin & Review, 22, 772-778.

Consolidation theory can explain the benefits of post-study rest, but it cannot explain the benefits of pre-study rest. Such a result does not invalidate consolidation theory, but the fact that the benefit is of comparable magnitude in both conditions and that such results can be easily explained by context models leaves one to wonder what the particular role of consolidation might even be.

Context models also have a simple explanation of the benefits of sleep. If memories are not acquired and stored during sleep, they cannot produce interference. Thus, a simple explanation is that waking periods simply lead to a greater proliferation of interfering memories. This may not explain the entirety of the benefits of sleep, but it does suggest that we do not require consolidation to explain the Jenkins and Dallenbach (1924) results.

Context and forgetting functions

Context models can explain forgetting functions in the same manner as they explain the recency effect: context changes gradually over time, making older memories less likely to match the current state of context at retrieval. However, the rate of forgetting is not linear: if a fixed proportion p of contextual elements is preserved from trial to trial, the expected proportion of elements that still match after t trials is p^t – an exponential function!

It may seem undesirable a priori that such models do not naturally produce power functions. However, they only produce exponential functions when all contextual elements change at a single rate. If different elements change at different rates, one can mimic a power function for the simple reason that a power function can be closely approximated by a mixture of exponential functions with different decay rates (Anderson & Tweney, 1997).
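The following sketch shows the single-rate and multi-rate cases side by side. The preservation rates in `rates` are arbitrary illustrative values, and the equal weighting of the element groups is an assumption.

```python
def single_rate_match(t, p=0.9):
    """Expected context match after t trials when every element is preserved with probability p."""
    return p ** t

def multi_rate_match(t, rates=(0.5, 0.9, 0.99, 0.999)):
    """Expected match when equal-sized groups of elements drift at different (assumed) rates."""
    return sum(p ** t for p in rates) / len(rates)

def loss_per_trial(match, t):
    """Fraction of the remaining match that is lost on the next trial."""
    return 1.0 - match(t + 1) / match(t)

# Single rate: the loss per trial never changes (exponential forgetting).
# Mixed rates: fast elements drop out early, so the loss per trial keeps shrinking.
for t in (0, 10, 100):
    print(t,
          round(loss_per_trial(single_rate_match, t), 4),
          round(loss_per_trial(multi_rate_match, t), 4))
```

The shrinking loss per trial in the mixed case is the signature that older memories are increasingly likely to survive, which is the property usually attributed to power-law forgetting.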

This may seem like a bit of a hack, but consider a participant in a list-learning paradigm. There are elements of context that may be changing rapidly, such as their thoughts in response to various words appearing on the screen. However, there are elements that aren’t changing at all, such as their spatial environment. Thus, it is not that implausible a priori that contextual elements may change at different rates.

Moreover, multi-scale context models have been constructed and have successfully accounted for a range of data (Howard, Shankar, Aue, & Criss, 2015; Mozer et al., 2009). While Wixted (2004) pointed out that consolidation can explain power law forgetting, a particular challenge for that account is that power functions of forgetting can be found even at very short timescales, including intervals of less than 20 seconds (Donkin & Nosofsky, 2012). Such a timescale is well below the proposed time for consolidation to occur.

Computational Considerations – How might consolidation actually operate?

Up to this point, I’ve been making a similar argument as Yonelinas and colleagues and arguing against consolidation on the grounds of parsimony – existing models can explain the critical data without it, so why bother with it? But I would like to take the argument even further here and ask, how would existing models behave if they contained a consolidation process?

Consolidation sounds intuitively plausible, but an important part of building a theory is specifying how such mechanisms occur rather than merely assuming them. After memories have been encoded, what would be responsible for strengthening them?

Locating and strengthening memories after they have been encoded is more difficult than it sounds. In a neural network, identities of individual memories are “lost” within the connections between neurons after they have been encoded. While consolidation could consist of modification of the connections between neurons, this often has consequences for several memories, and selectively strengthening older memories in such a process would be quite difficult without knowing which connections to modify. How does the brain “know” this?

One possibility is the act of memory retrieval itself, which was implemented in Meeter and Murre’s (2004) TraceLink model, a connectionist implementation of consolidation. The model behaves as a Hopfield network: when it is attempting to perform consolidation, a random cue is given to the network. This cue then “settles” into an attractor state formed by a previously learned memory, leading to its retrieval. After retrieval, the active units of the retrieved memory are strengthened by Hebbian learning.

Such a process sounds simple, but there are two important considerations. The first is that the authors found that it was quite easy for runaway consolidation to occur. That is, if a memory gets strengthened through consolidation, it is more likely to be retrieved and strengthened on future consolidation attempts. This produces a rich-get-richer dynamic where a small proportion of memories can reap all of the benefits of consolidation at the expense of the other memories. Meeter and Murre had to augment the dynamics of the network to minimize this possibility by placing restrictions on when and where in the network consolidation can occur.
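The feedback loop behind runaway consolidation can be illustrated with a deliberately simple urn-style simulation. This is not the TraceLink model or a Hopfield network. It only assumes that a random cue retrieves a memory with probability proportional to its current strength and that each retrieval adds a fixed, hypothetical `boost`.

```python
import random

def simulate_runaway(n_memories=5, n_steps=500, boost=1.0, seed=0):
    """Return each memory's share of total strength after repeated consolidation attempts."""
    rng = random.Random(seed)        # seeded so the run is repeatable
    strengths = [1.0] * n_memories   # all memories start out equally strong
    for _ in range(n_steps):
        # A random cue settles on one memory; stronger memories are more likely to win.
        winner = rng.choices(range(n_memories), weights=strengths)[0]
        # Retrieval strengthens the winner, raising its chances on later attempts.
        strengths[winner] += boost
    total = sum(strengths)
    return [s / total for s in strengths]

shares = simulate_runaway()
print(sorted(round(s, 3) for s in shares))
```

Even though every memory starts with the same strength, early chance retrievals get amplified and the final shares end up unequal. A real network would need something like the restrictions Meeter and Murre describe to keep this from getting out of hand.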

The second consideration is that they argued that their view of consolidation was restricted to slow-wave sleep. Such a process imposes a particular timescale on forgetting, but this implies that all of the benefits of consolidation should occur during sleep. As mentioned earlier, power functions of forgetting and the amelioration of retroactive interference during delays can not only occur during waking hours, but they can even occur on very short timescales, such as seconds or minutes. Thus, in order for consolidation to be responsible for the benefits of rest and the shape of forgetting functions, it may have to occur at several different time scales. While short-term cellular consolidation processes have been proposed (McGaugh, 2000), it is unclear whether they behave in the same manner as simulations of the TraceLink model.

Conclusions

Yonelinas et al. make a compelling case in favor of context-based models. It is essentially an argument of parsimony – we already have a theory that can explain many of the relevant phenomena a theory should explain, so why postulate additional mechanisms? A similar argument was made earlier by Brown and Lewandowsky (2010), who placed a deeper focus on computational considerations and inspired much of the commentary here.

None of the arguments presented here necessarily mean that consolidation does not happen. However, they do challenge the sufficiency of consolidation in explaining retroactive interference and forgetting functions, as it is clear that memory can benefit from rest before learning and this is something consolidation cannot easily explain. Context models can explain the benefits of rest both before and after learning, but can also explain several other important phenomena in the study of memory, such as benefits of spaced learning and temporal contiguity, making the broader theory of contextual binding a suitable candidate for a unified explanation of memory function.

While context-based models have impressively broad coverage of existing empirical phenomena, it is also important to emphasize that our understanding of memory at longer timescales is still particularly weak. Most laboratory studies focus on time intervals of less than one hour, as this is by far the easiest way to conduct a memory study with undergraduate participants. As more experiments are conducted with rich datasets at longer timescales, we will likely find that our existing models are too impoverished to explain all of the data.

References

Anderson, R. B. & Tweney, R. D. (1997). Artifactual power curves in forgetting. Memory & Cognition, 25, 724-730.

Cepeda, N. J., Pashler, H., Vul, E., Wixted, J. T., & Rohrer, D. (2006). Distributed practice in verbal recall tasks: A review and quantitative synthesis. Psychological Bulletin, 132, 354–380.

Donkin, C. & Nosofsky, R. M. (2012). A power-law model of psychological memory strength in short- and long-term recognition. Psychological Science, 23, 625-634.

Ecker, U. K. H., Tay, J.-X., & Brown, G. D. A. (2015). Effects of prestudy and poststudy rest on memory: Support for temporal interference accounts of forgetting. Psychonomic Bulletin & Review, 22, 772-778.

Estes, W. K. (1955). Statistical theory of spontaneous recovery and regression. Psychological Review, 62, 145–154.

Glenberg, A. M. (1976). Monotonic and nonmonotonic lag effects in paired-associate and recognition memory paradigms. Journal of Verbal Learning and Verbal Behavior, 15, 1–16.

Godden, D. R. & Baddeley, A. D. (1975). Context-dependent memory in two natural environments: On land and underwater. British Journal of Psychology, 66, 325-331.

Howard, M. W. & Kahana, M. J. (2002). A distributed representation of temporal context. Journal of Mathematical Psychology, 46, 268-299.

Howard, M. W., Shankar, K. H., Aue, W. R., & Criss, A. H. (2015). A distributed representation of internal time. Psychological Review, 122, 24–53.

Jenkins, J. G. & Dallenbach, K. M. (1924). Obliviscence during sleep and waking. American Journal of Psychology, 35, 605-612.

Lehman, M. & Malmberg, K. J. (2013). A buffer model of memory encoding and temporal correlations in retrieval. Psychological Review, 120, 155-189.

McGaugh, J. L. (2000). Memory – A century of consolidation. Science, 287, 248-251.

Meeter, M. & Murre, J. M. J. (2004). Consolidation of long-term memory: Evidence and alternatives. Psychological Bulletin, 130, 843-857.

Mensink, G. J., & Raaijmakers, J. G. W. (1988). A model for interference and forgetting. Psychological Review, 95, 434–455.

Mozer, M. C., Pashler, H., Cepeda, N., Lindsey, R., & Vul, E. (2009). Predicting the optimal spacing of study: A multiscale context model of memory. In Y. Bengio, D. Schuurmans, J. Lafferty, C. K. I. Williams, & A. Culotta (Eds.), Advances in neural information processing systems 22 (pp. 1321–1329). La Jolla, CA: NIPS Foundation.

Muller, G. E. & Pilzecker, A. (1900). Experimentelle Beiträge zur Lehre vom Gedächtnis [Experimental contributions to the science of memory]. Zeitschrift für Psychologie, Ergänzungsband, 1, 1-300.

Murdock, B. B. (1997). Context and mediators in a theory of distributed associative memory (TODAM2). Psychological Review, 104, 839–862.

Osth, A. F. & Dennis, S. (2015). Sources of interference in item and associative recognition. Psychological Review, 122, 260-311.

Rubin, D. C. & Wenzel, A. E. (1996). One hundred years of forgetting: A quantitative description of retention. Psychological Review, 103, 734-760.

Shiffrin, R. M., & Steyvers, M. (1997). A model for recognition memory: REM – retrieving effectively from memory. Psychonomic Bulletin & Review, 4, 145–166.

Wixted, J. T. (2004). The psychology and neuroscience of forgetting. Annual Review of Psychology, 55, 235-269.

Yonelinas, A. P., Ranganath, C., Ekstrom, A. D., & Wiltgen, B. J. (2019). A contextual binding theory of episodic memory: Systems consolidation reconsidered. Nature Reviews Neuroscience, 20, 364-375.
